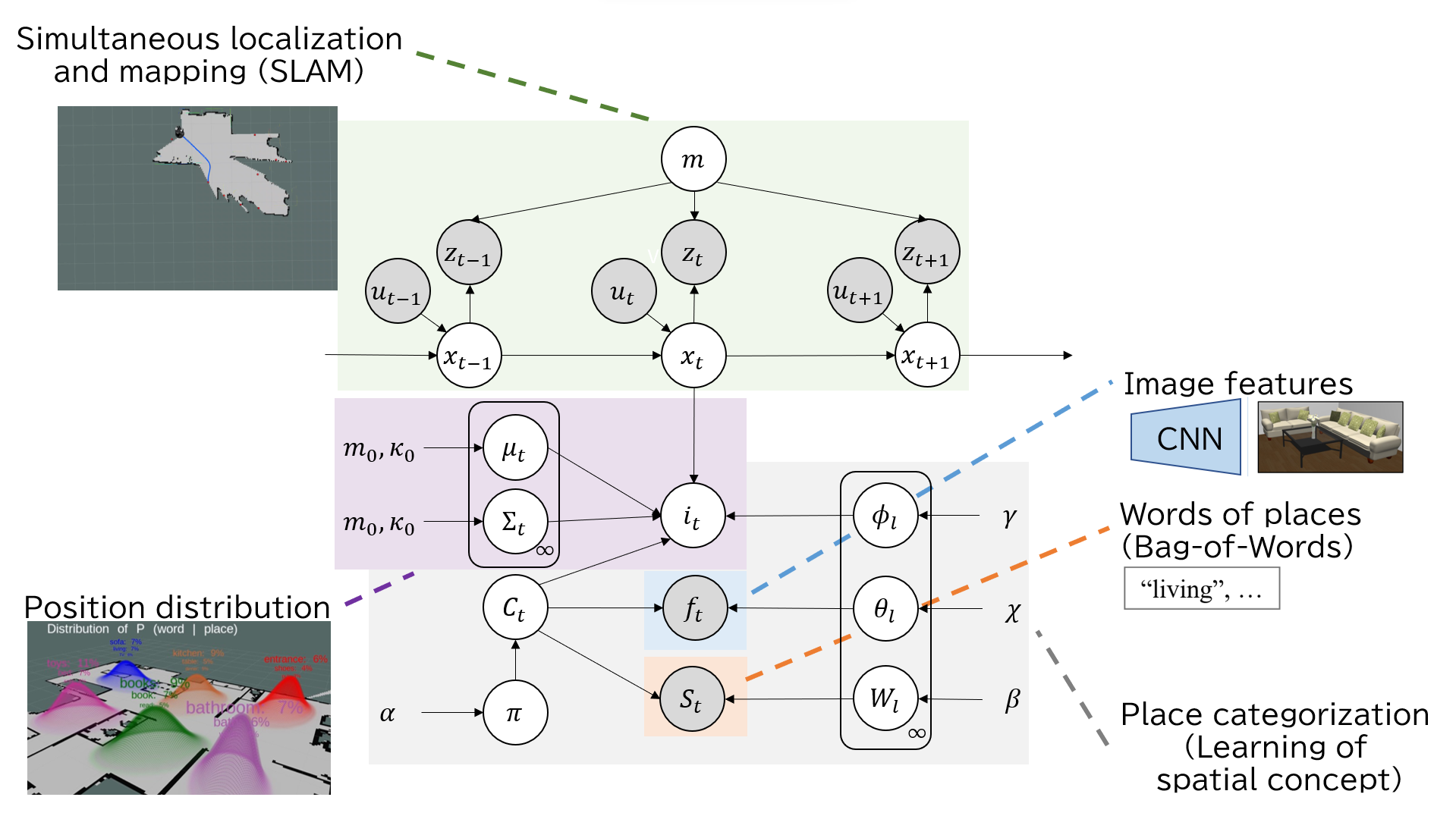

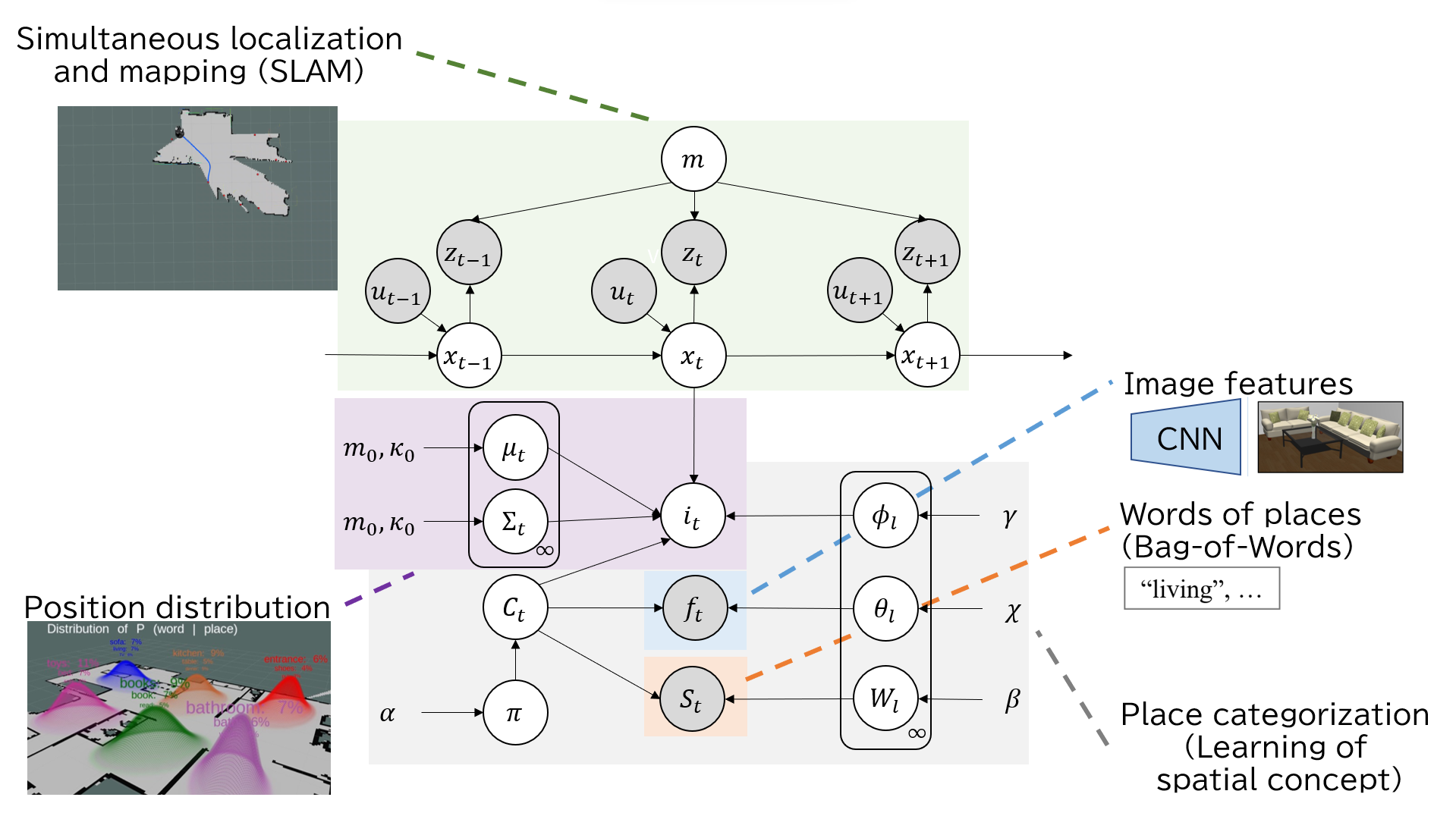

Graphical Model

Active semantic mapping is essential for service robots to quickly capture both the map of an environment and its spatial meaning, while also minimizing the burden on users during robot operation and data collection. SpCoSLAM, a method of semantic mapping with place categorization and simultaneous localization and mapping (SLAM), is well suited to environmental adaptation, as it is not limited to predefined labels. However, SpCoSLAM presents two issues that increase the burden on users: 1) users struggle to efficiently determine a destination for the robot’s quick adaptation, and 2) providing instructions to the robot becomes repetitive and cumbersome. To address these challenges, we propose Active-SpCoSLAM, which enables the robot to actively explore uncharted areas and employs CLIP for image captioning to provide a flexible vocabulary that replaces human instructions. The robot determines its actions by calculating information gain integrated from both semantics and SLAM uncertainties. We conducted experiments in a simulated environment, comparing the proposed method to other methods in terms of efficiency and applicability to object discovery tasks. Additionally, we tested the proposed method, which combines user instruction and CLIP, in a real environment. Our results demonstrated that the robot explored its environment with approximately five fewer iterations and 11 minutes faster compared to the case of random exploration. Moreover, our method achieved a higher success rate in object discovery tasks during earlier stages of learning compared to other methods. In conclusion, the proposed method rapidly covers an environment while gathering useful data for object discovery tasks, thus reducing the burden on users and enhancing the robot’s adaptability.

@inproceedings{ishikawa2023active,

title={Active semantic mapping for household robots: rapid indoor adaptation and reduced user burden},

author={Ishikawa, Tomochika and Taniguchi, Akira and Hagiwara, Yoshinobu and Taniguchi, Tadahiro},

booktitle={2023 IEEE International Conference on Systems, Man, and Cybernetics (SMC)},

pages={3116--3123},

year={2023},

organization={IEEE}

}

This work was supported by the Japan Science and Technology Agency (JST), Moonshot Research & Development Program (Grant Number JPMJMS2011), and the Japan Society for the Promotion of Science (JSPS), KAKENHI Grant Number JP20K19900, JP23K16975 and JP22K12212.